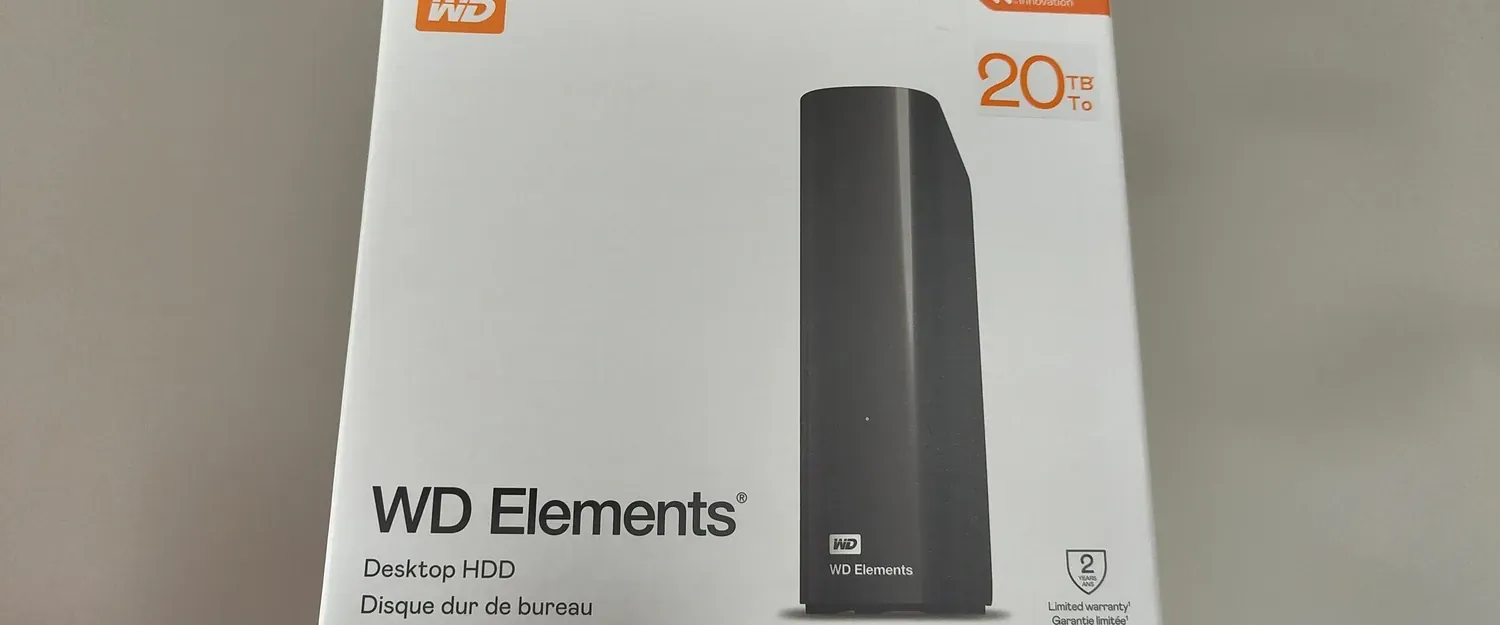

Almost four years ago, I bought a set of 14TB WD Elements. They were the cheapest HDD option on the market at the time, and the disks inside were quite good. I still own them today, and they work in my main PC thanks to the tape patch. Time flies, and my NAS has been filling up: it’s almost full now. I have a rather old Synology DS213j, and it’s time to replace its 8TB drives with something more substantial.

Let’s see what’s in the package this time.

sudo smartctl -a /dev/sdd

smartctl 7.4 2023-08-01 r5530 [x86_64-linux-6.14.0-36-generic] (local build)

Copyright (C) 2002-23, Bruce Allen, Christian Franke, www.smartmontools.org

=== START OF INFORMATION SECTION ===

Device Model: WDC WD200EDGZ-11CNKA0

Serial Number: SZGYPV7V

LU WWN Device Id: 5 000cca 405cd80c5

Firmware Version: 85.00A85

User Capacity: 20 000 588 955 648 bytes [20,0 TB]

Sector Sizes: 512 bytes logical, 4096 bytes physical

Rotation Rate: 7200 rpm

Form Factor: 3.5 inches

Device is: Not in smartctl database 7.3/5528

ATA Version is: ACS-5 (minor revision not indicated)

SATA Version is: SATA 3.5, 6.0 Gb/s (current: 6.0 Gb/s)

Local Time is: Sat Nov 29 13:20:33 2025 CET

SMART support is: Available - device has SMART capability.

SMART support is: Enabled

Let’s see how it performs:

Average read/write speed just below 200MB/s is absolutely fine for a storage disk. It’s interesting that my older 14TB drives had more consistent access times, but again, for data hoarding it makes no big difference.

Pre-heating

I like to stress-test new disks a bit to ensure they won’t fail early. Since I plan to shuck them, I’ll lose the warranty, so I want to make sure they don’t have any issues that might be caused by bad transportation or physical faults.

I initially used badblocks for this, but when I tried to run it, it failed:

sudo badblocks -b 4096 -wsv /dev/sdd

badblocks: Value too large for defined data type invalid end block (4882948096): must be 32-bit value

It looks like a 32-bit value isn’t enough to count the 4096-byte blocks on a 20TB drive 😅. I searched for other tools that could generate a similar workload and found fio. I started with the following command:

sudo fio --name=drive-test --filename=/dev/sdd --direct=1 --rw=readwrite --bs=10M --size=100% --numjobs=1

Unfortunately, the performance was terrible: barely 50MB/s. At this speed, it would take 4–6 days to complete. I tried badblocks again, this time with a larger block size:

sudo badblocks -b 8192 -wsv /dev/sdd

And it worked! Checking iotop, I saw it was running at 200–300MB/s. It will still take more than a day to overwrite the drive, but that’s reasonable.
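For the curious, here is a rough sketch of the arithmetic behind both observations: why `-b 4096` was rejected while `-b 8192` went through, and how long one full pass takes at a given speed. The device size comes from the badblocks error message above. The function names are made up for illustration, and the sketch assumes that the limit badblocks complains about is the largest unsigned 32-bit value.

```shell
#!/bin/sh
# Size of /dev/sdd as badblocks saw it: 4882948096 blocks of 4096 bytes
# (both numbers come from the error message above).
device_bytes=$((4882948096 * 4096))

# Assumption: the limit is the largest unsigned 32-bit value.
max_u32=4294967295

# Number of blocks of the given size (in bytes) needed to cover the device.
block_count() {
    echo $((device_bytes / $1))
}

# Prints "ok" if that block count fits into 32 bits, "too large" otherwise.
fits_u32() {
    if [ "$(block_count "$1")" -le "$max_u32" ]; then
        echo "ok"
    else
        echo "too large"
    fi
}

# Whole hours for one sequential pass at the given speed in MB/s (10^6 bytes/s).
pass_hours() {
    echo $((device_bytes / ($1 * 1000000 * 3600)))
}

for bs in 4096 8192; do
    echo "-b $bs: $(block_count "$bs") blocks ($(fits_u32 "$bs"))"
done

for speed in 50 200 300; do
    echo "$speed MB/s: about $(pass_hours "$speed") hours per pass"
done
```

At 50MB/s a single pass works out to about 111 hours (roughly 4.6 days), and at 200–300MB/s to about 18–27 hours. Keep in mind that `badblocks -w` writes and verifies four test patterns by default, so a complete run takes several such passes.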

Shucking

Tip

The best instructions for shucking these devices can be found on iFixit.

The disk won’t start in a standard PC unless the 3.3V pin is disabled. The simplest way to do that without damaging the disk is to cover the pin with black electrical tape. A more drastic option is to remove the pin with a knife… I’d stick with the tape 😉